A deep dive into generative AI in finance, covering real-world use cases, enterprise adoption, benefits, risks, and how financial institutions can implement it effectively.

Here’s what you will learn:

- What generative AI in finance actually means (vs traditional AI)

- Real-world examples from leading financial institutions

- Major risks like hallucinations, bias, and data security

- Step-by-step approach to implementing GenAI in finance

Every few years, a technology arrives in finance that separates the institutions paying attention from the ones playing catch-up. The spreadsheet did it. The internet did it. Algorithmic trading did it. Generative AI is doing it right now, and unlike those prior shifts, this one is moving faster and touching more job functions at once than anything the industry has seen before.

According to McKinsey, generative AI could add up to $340 billion in value annually to the global banking sector alone. The institutions already ahead aren’t waiting to see how the landscape settles. They’re actively reshaping it. The question worth asking isn’t whether your firm should engage with generative AI; it’s whether you can afford to be the one that doesn’t.


Generative AI in Finance and How It Differs from Traditional AI

There’s a lot of noise around this topic, and most of it stems from simple confusion: people use “AI” as a catch-all when they mean very different things. If you’re in finance and want to make good decisions about this technology, the distinction matters more than most articles acknowledge.

Financial institutions have used traditional machine learning for well over a decade — credit scoring models, algorithmic trading engines, fraud detection classifiers. These tools are trained to find patterns in historical data and make predictions.

They’re fast, narrow, and reliable within their lanes. But ask one to write a plain-English explanation for a denied loan, summarise a 180-page regulatory filing, or draft a client response, and it can’t. That’s not what those models are built for; it is exactly what generative AI is built for.

| Dimension | Traditional AI / ML | Generative AI (LLMs) |
| --- | --- | --- |
| Core question it answers | What does the pattern in this data predict? | What should I write, generate, or explain given this context? |
| Data it works with | Structured, numerical: transactions, scores, prices | Unstructured text: documents, reports, client communications |
| Finance use cases | Credit scoring, fraud rules, price prediction, portfolio optimisation | Report drafting, compliance summaries, client Q&A, due diligence |
| Output type | A number, classification, or probability | A paragraph, document, explanation, or analysis |
| Explainability | Often a black box, difficult to explain why | Can generate human-readable reasoning alongside outputs |
| Key limitation | Cannot handle novel, unstructured tasks | Can hallucinate; requires human review on high-stakes output |
| Best suited for | High-frequency decisions at scale with clean, structured data | Drafting, synthesis, explanation, and knowledge retrieval |

Finance needs both, but generative AI unlocks an entirely new category of work that previously could not be automated: anything requiring language, context, and coherent written output. That’s exactly where most finance functions spend enormous human hours.

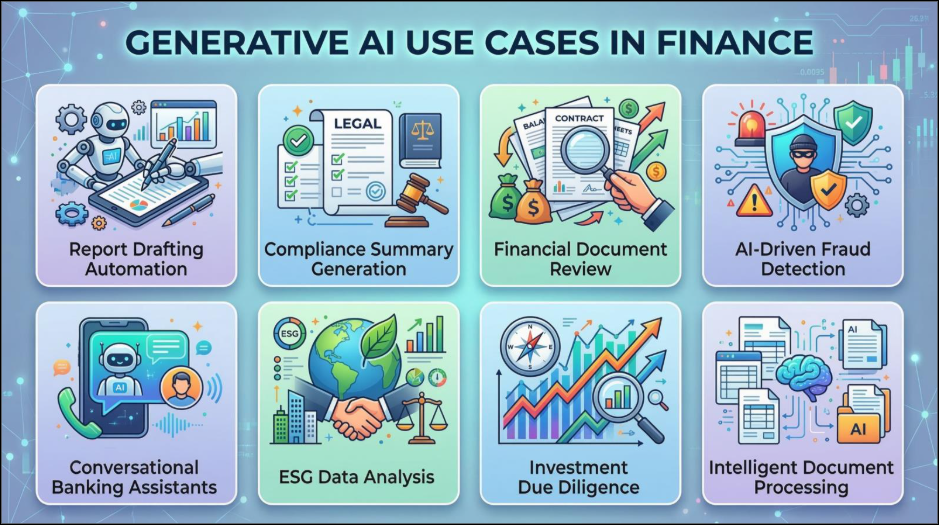

Key Use Cases of Generative AI in the Finance Industry

Let’s move past the theoretical. Across banking, asset management, insurance, and corporate finance, the use cases with genuine traction fall into eight core areas, each with meaningfully different ROI profiles and risk considerations.

Fraud Detection & Financial Crime

GenAI generates synthetic fraud scenarios to train detection models on patterns that haven’t happened yet, closing the gap between when fraudsters innovate and when defenses catch up (an approach sometimes loosely described as predictive analysis). Stripe deploys GPT-4 to flag suspicious actors in near real time, identifying signals that rules-based systems routinely miss.

Financial Reporting & FP&A

Monthly close commentary, variance analysis, board pack narratives — GenAI pulls metrics from source systems, compares to prior periods, and produces draft commentary ready for human review. Work that consumed two analyst days now takes two hours, with the role shifting from production to judgment.
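The data-gathering half of that workflow is ordinary arithmetic, and it can be sketched without any model at all. The example below is a minimal illustration, assuming a hypothetical `LineItem` structure and a made-up 5% materiality threshold; it computes period-over-period variances and formats only the material ones as context that a drafting prompt could receive.

```python
from dataclasses import dataclass


@dataclass
class LineItem:
    name: str
    current: float  # this period's actual
    prior: float    # comparison period's actual


def variance_rows(items, threshold_pct=5.0):
    """Return one dict per line item with absolute and percentage variance.

    threshold_pct is a hypothetical materiality cutoff used for illustration.
    """
    rows = []
    for item in items:
        change = item.current - item.prior
        # Percentage change is undefined when the prior period is zero.
        pct = None if item.prior == 0 else change / abs(item.prior) * 100
        rows.append({
            "name": item.name,
            "change": change,
            "pct": pct,
            "material": pct is None or abs(pct) >= threshold_pct,
        })
    return rows


def commentary_context(rows):
    """Format only the material variances as plain text for a drafting prompt."""
    lines = []
    for row in rows:
        if not row["material"]:
            continue
        pct = "n/a" if row["pct"] is None else f"{row['pct']:+.1f}%"
        lines.append(f"{row['name']}: {row['change']:+,.0f} ({pct})")
    return "\n".join(lines)


# Illustrative figures only, not taken from any real close.
items = [
    LineItem("Revenue", 1_200_000, 1_000_000),
    LineItem("Travel", 51_000, 50_000),
    LineItem("Cloud spend", 90_000, 120_000),
]
rows = variance_rows(items)
context = commentary_context(rows)
print(context)
```

Keeping the numbers deterministic and handing the model only the narrative step is one way to limit how much of the output a reviewer has to re-verify.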

Compliance & Regulatory Intelligence

Compliance teams face a near-constant stream of regulatory updates. GenAI reads new guidance, identifies what has materially changed versus prior versions, and surfaces specific obligations that require a response — compressing weeks of reading into hours of targeted briefing.
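Isolating what changed between two versions of a guidance document is a step that can be done deterministically before any model is asked to interpret it. The sketch below is one possible approach using Python’s standard `difflib`; the function name `changed_passages` and the sample regulatory text are invented for illustration.

```python
import difflib


def changed_passages(old_text, new_text):
    """Return the passages that differ between two versions, line by line."""
    old_lines = old_text.splitlines()
    new_lines = new_text.splitlines()
    matcher = difflib.SequenceMatcher(a=old_lines, b=new_lines, autojunk=False)
    changes = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            continue  # unchanged text needs no attention
        changes.append({
            "type": tag,  # "replace", "insert", or "delete"
            "old": old_lines[i1:i2],
            "new": new_lines[j1:j2],
        })
    return changes


# Hypothetical guidance text, one obligation per line.
old = "Firms must report within 30 days.\nRecords are kept for 5 years."
new = (
    "Firms must report within 10 days.\n"
    "Records are kept for 5 years.\n"
    "Boards must attest annually."
)
changes = changed_passages(old, new)
for change in changes:
    print(change["type"], change["old"], "->", change["new"])
```

Only the changed passages would then need to go to the model and the compliance reviewer, which keeps the briefing focused on what actually moved.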

Credit Assessment & Loan Processing

Automated lending’s persistent problem has been explainability. GenAI generates plain-language explanations for AI-driven credit decisions, improving transparency for applicants and satisfying “right to explanation” requirements now embedded in financial regulation globally.

Customer Service & Conversational Banking

The era of rigid, script-bound chatbots is ending. GenAI systems handle account queries, mortgage guidance, and dispute resolution in genuinely useful conversation, maintaining context across sessions. Bank of America’s Erica has surpassed 3 billion client interactions on this AI chatbot model.

ESG Reporting & Analysis

ESG data is notoriously fragmented — voluntary disclosures in inconsistent formats, ratings using different methodologies. GenAI synthesizes across sources into standardized analysis. BNP Paribas already deploys it specifically for ESG workflows, where data volume has outpaced human processing capacity.

Investment Research & Due Diligence

Private equity deal teams spend enormous amounts of time extracting financial metrics from CIMs and flagging covenant language in legal documents. GenAI compresses that timeline significantly, freeing senior professionals for the judgment work that actually creates deal value.

Intelligent Document Processing

The most underrated application. Finance runs on documents such as loan applications, regulatory filings, insurance policies, audit evidence. GenAI’s ability to read, extract, classify, and cross-reference across that document universe is arguably the fastest path to ROI for most institutions, with no custom model development required.

Enterprise Deployments of Generative AI in Financial Services

There’s a meaningful difference between a firm that has “explored” generative AI and one that has put it into production workflows. Here is where the latter group actually stands — with named institutions and documented outcomes.

| Institution | What They Built | Documented Outcome |
| --- | --- | --- |
| Morgan Stanley | AI assistant on OpenAI giving every financial advisor natural-language access to the firm’s entire research library | Insights that took hours now surface in seconds. Described internally as a knowledgeable colleague who has read everything and forgets nothing. |
| Goldman Sachs | GS AI Assistant deployed firmwide for document summarization, content drafting, and data interrogation; AI coding tools in software teams | 20–40% productivity gain in software development. Goldman simultaneously acknowledged that GenAI will structurally impact white-collar employment at scale. |
| Bank of America | Erica, a GenAI-powered virtual assistant handling retail banking interactions across tens of millions of customers | Over 3 billion client interactions handled. Account queries, dispute guidance, and activity alerts at a consistency and scale no human operation could match. |
| Mastercard | GenAI integrated into fraud detection infrastructure to improve accuracy and detection speed on novel fraud patterns | Improved detection of fraud patterns invisible to rules-based systems; materially reduced false positive rates affecting legitimate cardholders. |
| KPMG | Internal GenAI tool, built on OpenAI and deployed within a secure firewall, available to every tax consultant in the firm | Projected $12B in additional revenue from the broader AI program. Consultants research regulatory positions and draft client communications with AI assistance. |
| Deutsche Bank | Piloting Google Cloud GenAI capabilities to enhance analytical output for financial analysts processing global economic datasets | Reduced time to process complex economic data and produce client-ready insights. Part of a strategic bet that AI-enhanced research is a structurally competitive advantage. |


Benefits of Generative AI in Finance Beyond Efficiency

Most discussions of Generative AI benefits in finance converge on the same three words: faster, cheaper, better. That’s accurate but incomplete. The more interesting benefits are structural — they change what’s possible, not just how quickly you can do what you were already doing.

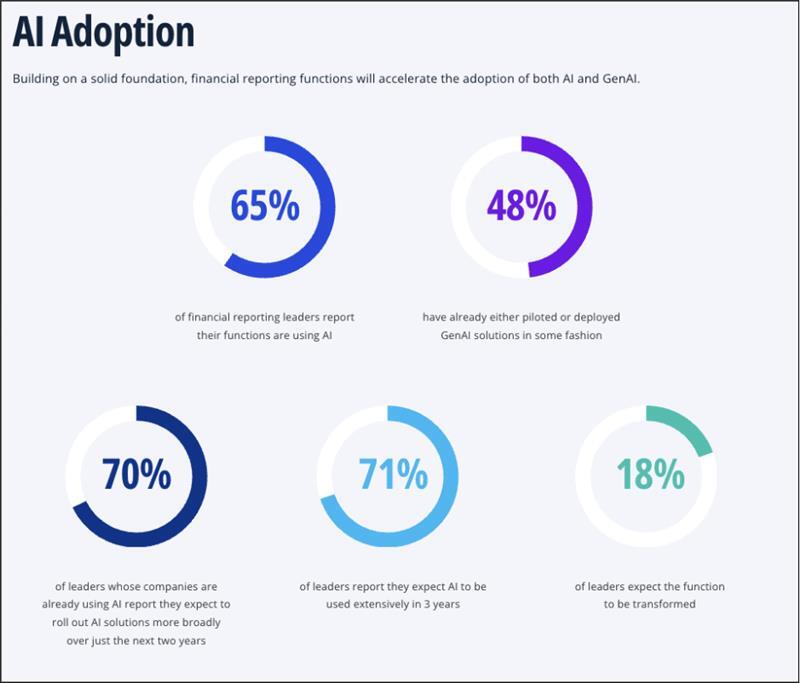

(Image Source: KPMG)

Based on the above findings from KPMG, a majority of financial reporting leaders are using AI and GenAI functions in their reporting workflows, with leaders citing benefits ranging from increased efficiency and reduced burden on staff to more accurate data and cost savings. This is no longer a forward-looking prediction; it’s what organizations that have actually implemented these tools are reporting from the field.

Speed That Changes the Competitive Equation

When an investment bank can complete initial due diligence in a day instead of a week, it changes which deals it can pursue. When a compliance team processes a regulatory update in hours rather than days, it changes how responsively the firm can operate. In finance, speed is not just an efficiency metric; it’s a strategic asset. GenAI is redistributing it.

Fewer Errors Where Errors Are Most Costly

Manual data entry and report population have always been a quiet source of material errors in financial output, the kind that surfaces during audits, restatements, or regulatory reviews. GenAI handling the data-to-narrative pipeline largely removes the category of error that comes from human fatigue and repetition. What it introduces instead, hallucinations, is a different and more visible problem, which is exactly why human review remains non-negotiable.

Capabilities That Used to Require Scale

A mid-market private equity firm can now run the same quality of document analysis on a target’s financials that a bulge-bracket competitor runs. A regional insurer can deploy customer service capabilities that rival those of a national carrier. The technology gap between large institutions and smaller ones is narrowing — though it requires willingness to invest in the right tools and the people who know how to use them.

Senior Expertise Redirected to High-Value Work

This is the benefit most often glossed over. The highest-value finance professionals (senior analysts, experienced risk managers, seasoned CFOs) spend a surprising amount of time on work that doesn’t require their expertise. GenAI doesn’t just make that work faster; it automates the production layer, so that senior time can go to the judgment calls that actually need it.

Fraud Detection That Keeps Pace With Fraud

The arms race between financial institutions and fraudsters has historically favored the attacker — they innovate; defenses react. GenAI’s ability to model novel attack patterns and synthesize behavioral anomalies across large transaction sets starts to tip that balance. It doesn’t end the arms race. But it materially changes the institution’s position in it.

Risks Involved with Generative AI in Finance Applications

The standard structure of a GenAI article in finance is: eight sections of benefits, one section of risks, and one paragraph of conclusion. That structure tells you something about who writes these pieces. Deploying generative AI in a regulated, high-stakes industry without a clear-eyed view of the risks is how organizations create serious problems. Here’s a structured view of what warrants genuine attention.

AI Hallucinations

LLMs confidently produce plausible but factually wrong outputs — fabricated citations, incorrect figures, misattributed data. One hallucinated number in a compliance memo can trigger regulatory scrutiny.

How to Mitigate: Mandatory human review on all consequential outputs; never use AI output directly in regulated documents without verification.
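Part of that verification can be automated as a first pass. The sketch below is a deliberately crude check, assuming the reviewer has a list of figures from the source system; the function names and the sample draft are hypothetical. It flags any number in a draft that does not appear among the source figures, so a human knows where to look first. It does not catch wrong attributions or wrong wording, and legitimate numbers such as dates will also be flagged for the reviewer to dismiss.

```python
import re

# A digit run with optional thousands separators and decimals, not glued to a
# preceding letter or dot (so the "3" in "Q3" is ignored).
NUMBER_PATTERN = re.compile(r"(?<![\w.])\d[\d,]*(?:\.\d+)?")


def extract_numbers(text):
    """Return the set of numeric values in text, ignoring thousands separators."""
    return {float(match.replace(",", "")) for match in NUMBER_PATTERN.findall(text)}


def unsupported_numbers(draft, source_figures):
    """Return numbers in the draft that do not appear among the source figures."""
    allowed = {float(value) for value in source_figures}
    return sorted(extract_numbers(draft) - allowed)


# Illustrative data: figures pulled from a source system and a model-written draft.
source_figures = [1200, 4.2]
draft = "Revenue rose to 1,200 with margin of 4.2% and churn of 7.5%."
flagged = unsupported_numbers(draft, source_figures)
print(flagged)
```

An empty result means only that every number has a source, not that the draft is correct; the human review step still applies.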

Data Security

Feeding client data, trading strategies, or M&A information into third-party LLMs creates competitive intelligence and compliance exposure. Consumer-grade AI tools have no place in professional financial workflows involving sensitive data.

How to Mitigate: Deploy behind organizational firewalls; require contractual data isolation; run pilots on anonymized data first.
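Anonymizing pilot data can start with something as simple as replacing identifiers with placeholders before text leaves a controlled environment. The following is a minimal sketch under stated assumptions: the two regular expressions are hypothetical stand-ins (real account formats vary by institution and jurisdiction), and pattern matching alone will miss names, addresses, and free-text identifiers.

```python
import re

# Hypothetical patterns for illustration only.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "ACCOUNT": re.compile(r"\b\d{8,12}\b"),
}


def redact(text):
    """Replace matched identifiers with numbered placeholders.

    Returns the redacted text and a mapping from placeholder to original value,
    which should stay inside the institution's own environment.
    """
    mapping = {}
    for label, pattern in PATTERNS.items():
        def substitute(match, label=label):
            token = f"[{label}_{len(mapping) + 1}]"
            mapping[token] = match.group(0)
            return token
        text = pattern.sub(substitute, text)
    return text, mapping


def restore(text, mapping):
    """Put the original values back into text returned by the model."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text


message = "Client jane.doe@example.com disputes a charge on account 12345678."
safe, mapping = redact(message)
print(safe)
```

Only `safe` would be sent onward; `restore` is applied locally once a response comes back.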

Systemic Risk

Multiple institutions relying on the same underlying models creates sector-wide single points of failure and “AI herding” — where competing AI systems converge on similar market views, amplifying volatility rather than dampening it.

How to Mitigate: Diversify model providers; raise as a formal risk committee agenda item; monitor evolving regulatory guidance from FCA, SEC, and Basel.

Algorithmic Bias

AI trained on historical financial data learns historical biases. Decades of discriminatory lending patterns will be replicated unless training is carefully designed to correct them. This is an active regulatory concern in the US, EU, and UK.

How to Mitigate: Audit training data for bias; implement fairness metrics in credit models; document decision logic to satisfy explainability requirements.
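As one example of a fairness metric, the sketch below compares approval rates across groups and expresses each as a ratio to a reference group. The 0.8 cutoff is a widely used rule of thumb adopted here as an assumption, not a threshold taken from any regulation cited in this article, and the group labels and decisions are invented. A single ratio is a screening signal, not a full fairness audit.

```python
from collections import defaultdict


def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs. Returns approval rate per group."""
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        approvals[group] += int(approved)
    return {group: approvals[group] / totals[group] for group in totals}


def adverse_impact_ratios(rates, reference_group):
    """Each group's approval rate divided by the reference group's rate."""
    reference = rates[reference_group]
    return {group: rate / reference for group, rate in rates.items()}


# Invented sample: group A approved 8 of 10 times, group B 5 of 10.
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
rates = approval_rates(decisions)
ratios = adverse_impact_ratios(rates, reference_group="A")

THRESHOLD = 0.8  # assumed rule-of-thumb cutoff
flagged = sorted(group for group, ratio in ratios.items() if ratio < THRESHOLD)
print(ratios, flagged)
```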

Black Swan Blindness

LLMs reason by analogy to historical situations. The 2008 crisis and COVID-19 market impact had no useful historical template. AI is least reliable when human judgment is most needed — in genuinely novel, high-stakes scenarios.

How to Mitigate: Never delegate final risk decisions to AI; maintain experienced human judgment as the final layer on novel or high-stakes scenarios.

The firms that will get this right are not the ones that deploy AI the fastest. They’re the ones that deploy it with enough human judgment around it to catch what it gets wrong — and enough discipline to know where it shouldn’t be used at all.

The Real Question Finance Teams Are Asking About Generative AI

What does this mean for my finance job?

It’s the question every finance professional is sitting with, even when they’re not asking it out loud. And it deserves a more honest answer than the standard “AI won’t replace you — it’ll just augment you” reassurance.

Here’s the clearer picture: the tasks most at risk are not entire jobs, but the components of jobs — specifically the high-volume, lower-judgment tasks that have traditionally formed the majority of work at junior and associate levels.

Goldman Sachs and Morgan Stanley are already running tools that perform data gathering, first-draft generation, and routine client communication well enough to reduce historical demand for large cohorts of junior hires. That’s a structural shift in how finance careers have traditionally been built, and acknowledging it clearly serves people better than reassuring platitudes.

| 🤖 What AI Is Taking Over | 🧠 What Stays Human Work |
| --- | --- |
| First-draft report and memo generation | M&A negotiation and deal judgment |
| Data gathering and basic reconciliation | Client trust and relationship management |
| Routine client query handling | Novel risk and black swan scenarios |
| Standard document extraction and classification | Ethical accountability in credit decisions |
| Regulatory update summarisation | Reviewing and validating AI-generated output |
| Entry-level due diligence tasks | Strategic FP&A and business partnering |
| Basic variance commentary | Crisis communication and client retention |

What’s equally true: the value of senior judgment, client relationships, strategic reasoning, and ethical accountability is not declining — it’s increasing, precisely because the analytical scaffolding around those decisions is getting automated. The professionals who build durable careers over the next decade will be those who understand both sides of this shift and position themselves accordingly.

Skills Worth Building Now for Financial Professionals

The finance roles most resilient to automation are built around capabilities AI cannot replicate: contextual judgment under uncertainty, trust-based client relationships, and the ability to work intelligently with AI tools rather than passively consuming their outputs.

- AI Fluency & Prompt Design

- Critical Output Evaluation

- Strategic FP&A

- Client Relationship Management

- Data Storytelling

- AI Governance & Risk

- Python / Data Analytics

- Judgment Under Uncertainty

- Business Partnering

The Right Way to Begin with Generative AI in Financial Services

The organizations generating real returns from generative AI in finance share one characteristic: they started with a specific problem, not a strategic ambition. “We want to be an AI-first organization” produces expensive proofs of concept that go nowhere. “We want to cut analyst time on monthly variance commentary by 70%” produces a measurable outcome, a clear success criterion, and a foundation to build on.

Start With Your Highest-Friction Workflow

The most glamorous GenAI applications in finance, such as real-time market intelligence and autonomous portfolio management, are also the most complex and the highest-risk to get wrong. The fastest ROI usually comes from unglamorous applications: report drafting, document review, compliance summarization. Start where your team feels the most pain; if the team cannot pinpoint that workflow itself, consider bringing in an AI consultancy. Prove value. Then expand.

Get Data Governance Right

Decide explicitly what data can flow into which systems, and under what contractual protections. In a regulated industry, this is a legal, compliance, and governance decision, not an IT one. Many organizations run initial pilots on anonymized or synthetic data precisely to build confidence before connecting live client or transaction data.

Build Human Review into the Workflow from Day One

Not as an afterthought — as a core design element. AI generates; humans review and approve. This isn’t only about catching errors. It’s about building the organizational knowledge to distinguish where GenAI output is reliable from where it isn’t — which varies meaningfully by task, model, and context. That knowledge is genuinely valuable and takes time to develop.
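One way to make review a design element rather than a policy document is to make unapproved output impossible to release. The sketch below is a minimal illustration with hypothetical names (`Draft`, `review`, `publish`): content can only be published after a named reviewer approves it, and the recorded decisions double as a rough per-task signal of where output has proven reliable.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Draft:
    task: str      # e.g. "variance_commentary"
    content: str   # AI-generated text awaiting review
    status: Status = Status.DRAFT
    reviewer: Optional[str] = None
    notes: List[str] = field(default_factory=list)


def review(draft: Draft, reviewer: str, approve: bool, note: str = "") -> Draft:
    """Record a named human decision on the draft."""
    draft.reviewer = reviewer
    draft.status = Status.APPROVED if approve else Status.REJECTED
    if note:
        draft.notes.append(note)
    return draft


def publish(draft: Draft) -> str:
    """Release content only after explicit human approval."""
    if draft.status is not Status.APPROVED:
        raise PermissionError(f"Draft for {draft.task!r} has not been approved")
    return draft.content


def approval_rate_by_task(drafts: List[Draft]) -> dict:
    """Share of reviewed drafts approved per task, a rough reliability signal."""
    reviewed, approved = {}, {}
    for d in drafts:
        if d.status is Status.DRAFT:
            continue  # not yet reviewed
        reviewed[d.task] = reviewed.get(d.task, 0) + 1
        approved[d.task] = approved.get(d.task, 0) + (d.status is Status.APPROVED)
    return {task: approved[task] / reviewed[task] for task in reviewed}


# Invented examples of reviewed output.
drafts = [
    Draft("variance_commentary", "Revenue rose on higher volumes."),
    Draft("variance_commentary", "Cloud spend fell after renegotiation."),
    Draft("covenant_summary", "Leverage covenant is 4.0x."),
]
review(drafts[0], "analyst_a", approve=True)
review(drafts[1], "analyst_a", approve=True)
review(drafts[2], "counsel_b", approve=False, note="Misquoted leverage covenant")
rates = approval_rate_by_task(drafts)
print(rates)
```

Over time, a table like `rates` is the organizational knowledge described above in a form that can be inspected and challenged.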

Measure the Before-and-After

Set a baseline for the workflow you’re improving: time, cost, error rate, staff burden. Measure the same metrics after deployment. Pilots that can’t show a clear, quantified improvement rarely survive budget cycles, and arguably shouldn’t. The firms seeing the best results treat these as operational changes with defined business cases, not technology experiments.
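The comparison itself is simple arithmetic, shown here as a short sketch. All figures are invented for illustration, and the 70% target simply mirrors the example goal of cutting analyst time on variance commentary mentioned earlier in this section.

```python
def reduction_pct(baseline: float, after: float) -> float:
    """Percentage reduction relative to baseline (positive means the metric fell)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - after) / baseline * 100


def compare(baseline: dict, after: dict) -> dict:
    """Reduction per metric, rounded to one decimal place."""
    return {
        metric: round(reduction_pct(baseline[metric], after[metric]), 1)
        for metric in baseline
    }


# Hypothetical per-report measurements before and after a pilot.
baseline = {"analyst_hours": 16.0, "cost_usd": 2000.0, "errors_per_report": 4.0}
after = {"analyst_hours": 4.0, "cost_usd": 800.0, "errors_per_report": 3.0}

results = compare(baseline, after)
TARGET_HOURS_REDUCTION = 70.0  # assumed success criterion
meets_target = results["analyst_hours"] >= TARGET_HOURS_REDUCTION
print(results, meets_target)
```

Fixing the success criterion before the pilot starts is what turns the result into a business case rather than an anecdote.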

Invest in Capability

The limiting factor in most GenAI implementations isn’t the technology; it’s the organisational knowledge to use it well. Finance teams that understand how to frame prompts effectively, evaluate outputs critically, and integrate AI into complex workflows will get dramatically more value from the same tools than those who treat them as black boxes. That capability is built through deliberate training, not passive exposure.

Where This Leaves Us

Generative AI in finance has arrived. The question of whether your institution should engage with it was settled some time ago; the only remaining question is whether you’re engaging with it well.

The firms building genuine competitive advantage are not the ones moving fastest. They’re the ones moving most deliberately — identifying where AI creates real leverage, building the governance to use it responsibly, and developing the human judgment to work alongside it intelligently.

The technology will keep improving. The regulatory frameworks will gradually catch up. The competitive dynamics will continue to shift. What won’t change is the premium on clear thinking, sound judgment, and the ability to ask the right question with or without an AI to help you answer it.

Frequently Asked Questions

What is generative AI in finance?

Generative AI in finance refers to AI systems that can create and summarize financial content such as reports, compliance documents, and research insights. Unlike traditional AI, it works mainly with unstructured data like filings, emails, and analyst notes.

How are financial institutions using generative AI today?

Banks and financial firms are using it for report drafting, fraud detection support, compliance summarization, customer service automation, and investment research. Many institutions also use it to process large volumes of financial documents faster.

Is generative AI safe to use in financial services?

It can be safe when implemented with strong governance. Financial firms typically use secure environments, restrict sensitive data access, and require human review for critical outputs to reduce risks such as hallucinations or data leaks.

Will generative AI replace finance jobs?

Most experts believe it will automate repetitive tasks rather than entire roles. Finance professionals will still be needed for judgment, client relationships, strategic decisions, and validating AI-generated outputs.

What is the biggest advantage of generative AI in finance?

Its biggest advantage is the ability to analyze and summarize large volumes of financial information quickly. This allows teams to focus more on strategic decision-making instead of manual research and documentation.